Unfortunately, it couldn’t be all play, all the time in Atlanta. We had a full day of work ahead of us to set the record straight on a few items.

Does Octane deserve to be considered one of the nation’s best coffee establishments? Yes. Especially when paired with a popover from the adjoining Little Tart Bakeshop in the Grant Park location.

Will Rosebud provide a superlative experience for the discerning brunch enthusiast? We started with fried cheese grits with smoked cheddar and pepper jelly. Need I go on?

Can Porter Beer Bar live up to its reputation as one of Atlanta’s best destinations for enjoying a wide variety of amazing beer? Early results are extremely promising, but from a due diligence standpoint, we feel that it would be imprudent to end our investigation prematurely.

“Atlanta” is a flexible concept

Refreshed and recharged, and in gratitude to Atlanta’s gracious hospitality, we thought it was only right that we do our part to assist them with their zombie problem. Atlanta Zombie Apocalypse promises an interesting variant on the standard haunted attraction. Unlike NetherWorld, which was incongruously located a few blocks from suburban strip malls, AZA is convincingly nestled in the woods off a deserted stretch of highway. The attraction is built on and around the grounds of a deserted motel and it didn’t take much effort to believe that zombies might be nearby.

For those who don’t frequent this type of entertainment, the haunted attraction industry is all grown up and embraces more than what we endearingly referred to as a “haunted house” back in the day. There is a growing number of variants on the theme of scaring people for fun and profit, including haunted mazes, haunted trails, scare zones, haunted hayrides, etc. Many attractions aggregate and even mash up several of these styles to make sure that you don’t get too comfortable. AZA provides three attractions which can be generally described as 1) haunted house/haunted trail mashup, 2) haunted maze + paintball and 3) haunted house/immersive theatre.

Out of respect for the organizers, I won’t give up any spoilers, but I will share some general impressions. Admission can be purchased on a per-attraction basis. I was here from California and was in the middle of nowhere, GA. I was going to all three.

Although the attractions can be visited on a standalone basis, some of the employees had specific ideas on the order in which to experience them if you were seeing more than one. They were essentially imposing a meta-level of story and emotional engagement that hadn’t actually been accounted for in the overall design of the attraction. The best part is: different people had different opinions on the ideal order, and they were pretty passionate about their individual assessments. It was like having a personal team of horror sommeliers. More importantly, it was unscripted proof that the tendency to find story in our lives is central to the human experience. It also echoed my thoughts on the very personalized reactions that one can expect from immersive experiences.

Unlike many haunted attractions, which are essentially self-directed (i.e., here’s the entrance, proceed to the exit), each of these attractions had a guided component. While the most memorable features of many attractions are the live performers, the level of interactivity is typically low and unidirectional: they scare, you scream, keep moving. The guides and performers at AZA added a sense of theatricality and interactivity which enhanced the overall immersive quality of the experience. The first attraction we chose was called “The Curse,” and it took advantage of the ample, wild surroundings by using parts of the neighboring forest as the set. There was a backstory and a mystery to the attraction, and we were recruited as investigators and addressed directly by the guides and performers. Although I saw room for improvement, I was certainly entertained.

The blogger in the line of duty

For me, the main attraction, and the reason I found myself in Conley, GA deferring my important research of Atlanta’s brewpubs, was the “Zombie Shoot.” We were provided with a semi-automatic AirSoft gun (essentially paintball, but using specialized BBs instead of paintballs) and protective headgear, and were told, “Aim for the head or the chest. Keep firing until they go down.” Pretty much the exact opposite of your standard preshow advisement, “Keep your arms and legs inside the vehicle at all times.” Also, in case it’s not totally clear, you’re firing at real people, I mean zombies, not cardboard cut-outs or animatronics. Did it work? Mostly. Was it fun? Definitely.

Maybe Shakespeare actually IS the future of haunted attractions

The final attraction was called “?” and I think it’s telling that it might have been my favorite even though on its surface, it seemed to be a standard haunted house. Why? Story. It was the most theatrical of the three and featured the most interesting and inventive story, while still remaining focused on trying to scare you out of the building. Granted, we’re not talking Shakespeare, but it was a refreshing and successful variant on what could have been an uninspired production.

The production value in these attractions was not award-winning, and the storytelling was not expert, but I’m still glad I checked them out. It was all produced with a lot of heart, and everybody from the people selling the tickets to the performers and guides and zombies seemed to be having a good time and wanted to make sure that we were too. By providing three very different, hybridized attractions, it was clear that the creators were willing to take some risks and try out some new ideas, which will always impress me more than playing it safe.

Crossing the line from a one-way performance to an interactive experience can immediately raise many expectations that are potentially difficult to meet. The biggest challenge becomes the reconciliation between the audience’s sense of agency and the practical and aesthetic parameters of structured entertainment. It’s a tricky balancing act between living an experience and playing along. To choose an extreme yet practical example from this show, being provided with a gun and an opportunity to fend off assailants is real and pulls you right into the experience. However, remaining cognizant of where you can and can’t shoot can temporarily pull you out. It trades the steady equilibrium of an evenly immersive but passive experience for one which attempts to balance instances of deep immersion within a more structured rules-based framework. The resulting dynamics suggest a hybrid with gaming, sports, and other experiences that would otherwise seem at odds with what (in this case) is essentially theater. Personally, I think that finding a satisfying balance in these intersecting sensibilities is a challenge worth taking on, and that we’re hopefully just scratching the surface of the types of experiences we can expect to enjoy in the future.

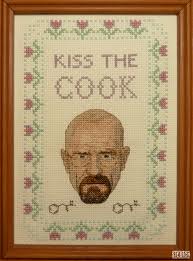

Let me just cut to the chase. Breaking Bad is an intense show. It has successfully navigated the murky waters of long-format television to create an epic story that has momentum and feels real without getting

Let me just cut to the chase. Breaking Bad is an intense show. It has successfully navigated the murky waters of long-format television to create an epic story that has momentum and feels real without getting  Before you write me off as a Luddite, (actually a pretty audacious proposition if you’ve read any of my other posts) I admit that the concept of second screen might have its place. I’m an unabashedly huge fan of

Before you write me off as a Luddite, (actually a pretty audacious proposition if you’ve read any of my other posts) I admit that the concept of second screen might have its place. I’m an unabashedly huge fan of